Lately, I've been arguing that preregistering focal predictions and pre-analysis plans can provide useful tools that enable scientists to more effectively and efficiently calibrate their confidence in theory and study results, respectively, thereby facilitating progress in science. But not everyone agrees. Recently, I had the opportunity to debate the question of whether preregistration would speed or slow progress in science with Rich Shiffrin, a professor in the Department of Psychological and Brain Sciences at Indiana University, who comes to these issues with a very smart, thoughtful, and different perspective from the one I hold. I wanted to share the discussion with you (with his permission), in case you'd like to join us in thinking through some of the complexities of these issues.

RS: Having run a number of colloquia and symposia on reproducibility, and running one at Psychonomics this fall (and having published in PNAS a paper addressing this issue), I read your editorial with some interest. Although not objecting to anyone who chooses to pre-register a study, I would not like to see it in general use, because I think it would slow progress in science. There are many reasons for my views, and they won’t fit in an email. But some are related to the theme of your editorial and the reply, and to the title of my upcoming symposia: “Should statistics govern the practice of science, or science govern the practice of statistics?” It is my feeling that the pre-supposition you make and Nosek also makes is in error concerning the way science works, and has always worked. For example, you list two views of pre-registration:

i) Have these data influenced my theoretical prediction? This question is relevant when researchers want to test existing theory: Rationally speaking, we should only adjust our confidence in a theory in response to evidence that was not itself used to construct the theoretical prediction in question (3). Preregistering theoretical predictions can help researchers distinguish clearly between using evidence to inform versus test theory (3, 5, 6).

ii) Have these data influenced my choice of statistical test (and/or other dataset-construction/analysis decisions)? This question is relevant when researchers want to know the type I error rate of statistical tests: Flexibility in researcher decisions can inflate the risk of false positives (7, 8). Preregistration of analysis plans can help researchers distinguish clearly between data-dependent analyses (which can be interesting but may have unknown type I error) and data-independent analyses (for which P values can be interpreted as diagnostic about the likelihood of a result; refs. 1 and 9).

I argue and continue to argue that science is almost always post-hoc: That science is driven by data and by hypotheses, by models and theories we derive once we see the data, and that progress is generally made when we see data we do not anticipate. What your points i) and ii) pre-suppose is that there is a problem with basing our inferences on the data after we see the data, and with developing our theories based on the data we see. But this is the way science operates. Of course scientists should admit that this is the case, rather than pretend after the fact in their publication that they had a theory in advance or that they anticipated observation of a new pattern of data. And of course doing science in this post-hoc fashion leads to selection effects, biases, distortions, and opens the possibility for various forms of abuse. Thus good judgment by scientists is essential. But if science is to progress, we have to endure and live with such problems. Scientists are and should be skeptical and do not and should not accept published results and theories as valid or ‘true’. Rather, reports with data and theory are pointers toward further research, and occasionally the additional research proves the first reports are important and move science forward.

AL: Hi Rich, Thanks for writing. I think the space between our positions is much smaller than you think. You quote me saying: "Preregistering theoretical predictions can help researchers distinguish clearly between using evidence to inform versus test theory" and "Preregistration of analysis plans can help researchers distinguish clearly between data-dependent analyses (which can be interesting but may have unknown type I error) and data-independent analyses (for which P values can be interpreted as diagnostic about the likelihood of a result)." And then you say: "Of course scientists should admit that [basing our inferences and theories on the data after we see it]...is the case, rather than pretend after the fact in their publication that they had a theory in advance or that they anticipated observation of a new pattern of data."

These positions are not in conflict. We are both saying that it's important for scientists to acknowledge when they are using data to inform theory, as well as when they are using data to inform analytic decisions. The first statement of mine that you quote says only that scientists should distinguish clearly between using data to inform versus test theory. I agree with you that it's extremely important and common and useful for scientists to use data to inform theory (and I wish I'd had the space to say that explicitly in the PNAS piece--I was limited to 500 words and tried to do as much as I could in a very tight space). The second statement of mine that you quote does explicitly acknowledge that data-dependent analyses can be interesting (and again, I wish I'd had the space to add that data-dependent analyses are often an important and necessary part of science).

So, I do not presuppose that "there is a problem with basing our inferences on the data after we see the data, and with developing our theories based on the data we see" -- I only presuppose that there is a problem with not clearly acknowledging when we do either or both of these things. The two elements of preregistration that I describe can help researchers (a) know and (b) transparently acknowledge when they are using data to inform their theoretical prediction and/or analytic decisions, respectively.

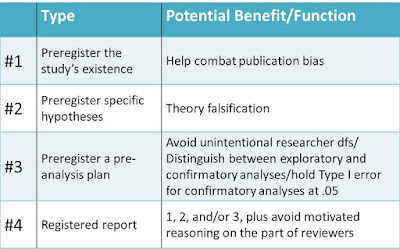

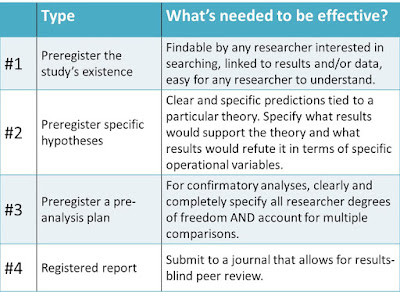

Alternatively, if you don't have the goal of testing theory and/or constraining Type I error, you can simply state that clearly and explicitly in a paper. No need to preregister as long as you're up front on this point. But IF you have the goal of testing a theoretical prediction, preregistering that prediction can be a useful tool to help achieve that goal. And IF you have the goal to constrain Type I error, a carefully specified pre-analysis plan can be a useful tool to help achieve that goal.

Your position seems to be that most scientists don't have and shouldn't have those goals. (Please tell me if that's not a fair characterization.) I think some scientists DO have those goals and that there are many contexts in which one or the other (or both) goals are appropriate and help to advance science. So we perhaps disagree about how common and important these goals are. But I think we are very much in agreement that using data to inform theory is crucial for advancing science, that it's very common, and that researchers should not pretend they are theory-testing when they are in fact theory-building.

RS: Thanks for responding. I guess we mostly agree, although space in the editorial did not allow that to be entirely clear.

However, you say:

“But IF you have the goal of testing a theoretical prediction, preregistering that prediction can be a useful tool to help achieve that goal. And IF you have the goal to constrain Type I error, a carefully specified pre-analysis plan can be a useful tool to help achieve that goal.”

I think we have somewhat different views here, and the difference may depend on one’s philosophy of science. The “IF” may be in question. This may be related to the title of several symposia I am running: “Should statistics govern the practice of science or science govern the practice of statistics?”

- Scientists do run experiments to test theories. But that is superficial and the reality is more complex: We know all theories are wrong, and the real goal of ‘testing’ is to find ways to improve theory, which often happens by discovering new and unexpected results during the process of testing. This is seldom said up front or even after the fact. Of course not all scientists realize that this is the real goal…

Mostly this perspective applies to exploratory science. When one wants to apply scientific results, which happens in health and engineering contexts fairly often, then the goal shifts because the aim is often to discover which of two (wrong) theories will be best for the application. One can admit before or after the fact that this is the goal, but in most cases it is obvious that this is the case from the context. There are cases in social psychology where the goal is an application, but I think the great majority of research is more exploratory in character, with potential or actual applications a bit further in the future.

- The goal of restraining Type 1 error? Here we get into a statistical and scientific domain that is too long for an email. Perhaps some people have such a goal, but I think this is a poor goal for many reasons, having to do with poor statistical practice, a failure to consider a balance of goals, the need for exploration, and perhaps most important, the need for publication of promising results many of which may not pan out, but a few of which drive science forward.

AL: I think you're exactly right about where our difference in opinion lies, and that it may depend on one's philosophy of science. And I often have the slightly uncomfortable feeling, when I'm having these kinds of debates, that we're to some extent just rehashing debates and discussions that philosophers of science have been having for decades.

Having said that, let me continue on blithely arguing with you in case it leads anywhere useful. :)

You say: "We know all theories are wrong, and the real goal of ‘testing’ is to find ways to improve theory, which often happens by discovering new and unexpected results during the process of testing." I agree with this characterization. But I think we can in many cases identify ways to improve theory more quickly when we are clear about the distinction between using results to inform vs. test theory, and when we are clear about the distinction between results that we can have a relatively high degree of confidence in (because they were based on data-independent analyses) and results that we should view more tentatively (because they were based on data-dependent analyses).

For example, if I am messy about the distinction between informing vs. testing theory, I might run a bunch of studies, fit my theory to the results, but then think of and report those results as "testing and supporting" the theory. That leads me to be too confident in my theory. When I see new evidence that challenges my theory, it may take me longer to realize my theory needs improvement because I have this erroneous idea that it has already received lots of support. I would be more nimble in improving my theory if I kept better track of when a study actually tests it and when a study helps me construct it.

Meanwhile, if I am messy about the distinction between data-independent and data-dependent analyses, I may infer that my study results are stronger than they actually are. If I think I have very strong evidence for a particular effect -- as opposed to suggestive but weaker evidence -- I will be too confident in this effect, and therefore slower to question it when conflicting evidence emerges.
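
As an illustration of how data-dependent analysis can overstate the strength of evidence, here is a minimal simulation sketch. It is not part of the original exchange, and every detail is an assumption chosen for simplicity: two groups with no true difference, three independent outcome measures, a z-test with known unit variance, and the hypothetical function names `two_sided_p` and `simulate`. The "fixed" analysis tests only the first outcome, as a pre-analysis plan might specify; the "flexible" analysis reports whichever outcome gives the smallest p-value.

```python
import math
import random


def two_sided_p(group_a, group_b):
    """Two-sided p-value from a two-sample z-test (unit variance assumed known)."""
    n = len(group_a)  # both groups are assumed to have the same size
    mean_diff = sum(group_a) / n - sum(group_b) / n
    z = mean_diff / math.sqrt(2.0 / n)
    return math.erfc(abs(z) / math.sqrt(2.0))


def simulate(n_sims=4000, n_per_group=20, n_outcomes=3, alpha=0.05, seed=1):
    """Return (fixed_rate, flexible_rate) of false positives when the null is true."""
    rng = random.Random(seed)
    fixed_hits = 0
    flexible_hits = 0
    for _ in range(n_sims):
        p_values = []
        for _ in range(n_outcomes):
            # Both groups come from the same distribution, so any "effect" is noise.
            a = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
            b = [rng.gauss(0.0, 1.0) for _ in range(n_per_group)]
            p_values.append(two_sided_p(a, b))
        # Fixed plan: only the first outcome is ever tested.
        if p_values[0] < alpha:
            fixed_hits += 1
        # Flexible plan: the outcome is picked after seeing which one "worked".
        if min(p_values) < alpha:
            flexible_hits += 1
    return fixed_hits / n_sims, flexible_hits / n_sims


if __name__ == "__main__":
    fixed_rate, flexible_rate = simulate()
    print(f"fixed: {fixed_rate:.3f}  flexible: {flexible_rate:.3f}")
```

Under these assumptions the fixed analysis should produce false positives at close to the nominal 5% rate, while the flexible analysis should land near 1 - 0.95^3, or about 14%. Real studies rarely have fully independent outcomes, so the actual inflation depends on the kind and amount of flexibility involved.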

To me, all of this boils down to the simple idea that preregistering predictions and analysis plans can -- if we do it carefully -- help us to more appropriately calibrate our confidence in our theories and our confidence in the strength of evidence provided by a given study. I do not think preregistration is useful in every context, but I do think it can be useful in many contexts and that it's worth considering as a tool to boost the informational value of our research.

RS: All science starts with data and then scientists invent theories to explain the data. A good scientist does not stop there but then explores, tests, and generalizes the new ideas. Sometimes the data comes from ‘expensive’ studies that are not easy to pursue, and then the good scientist will report the data and the proposed theory or hypotheses, but not pretend the ideas were known a priori.

AL: I definitely agree with this!

RS: Let me add a PS: I repeat that I would not object to anyone wanting to pre-register a study, but I don’t think this is the best way to accomplish the goals you profess, and I certainly would not want to see any publication outlet require pre-registration, and I certainly would not like to see pre-registration become the default model of scientific practice.

AL: Very clear -- thank you! Do you want me to link to your PNAS article for anyone who wants to read more about your position on these issues, or would there be a better place to direct them?

RS: Yes. By the way, if you have any reactions to the PNAS article and want to convey them to me, I’d be glad to hear them.

AL: I think it's brilliant and I especially like the tree metaphor and the point that the existence of many individual failures doesn't mean that science is failing. I think one place where we might differ is in how we see irreproducible reports -- you describe them as buds on the tree that don't end up sprouting, but I think sometimes they sprout and grow and use up a lot of resources that would be better spent fueling growth elsewhere. I think this happens through a combination of (a) incentive structures that push scientists to portray more confidence in their theories and data than they should actually have and (b) publication bias that allows through data supporting an initial finding while filtering out data that would call it into question and allow us to nip the line of research in the metaphorical bud. In other words, I think scientists are often insufficiently skeptical about (a.k.a. too confident in) the buds on the tree. I see preregistration as a tool that can in many contexts help address this problem by enabling researchers to better calibrate their confidence in their own and others' data. (Part of the reason that I think this is that preregistration has helped my lab do this -- we now work with a much more calibrated sense of which findings we can really trust and which are more tentative...we still explore and find all sorts of cool unexpected things, but we have a better sense of how confident or tentative we should be about any given finding.)

Meanwhile, I think you worry that preregistration will hamper the natural flourishing of the science tree (a totally reasonable concern) and you think that researchers should and perhaps already do view buds with appropriate levels of skepticism (I agree that they should view individual findings with skepticism but I don't think they typically do).

RS: I think the history of science shows science has always wasted and still wastes lots of resources exploring dead ends. The question is whether one can reduce this waste without slowing progress. No one knows, or knows how to carry out a controlled experiment to tell. What we do know is progress is now occurring extremely rapidly, so we best be hesitant to fix the system without knowing what might be the (unintended, perhaps) consequences.

I see no danger in very small steps to improve matters, but don’t want to see large changes, such as demands by journals for pre-registration.